Working paper coming soon!

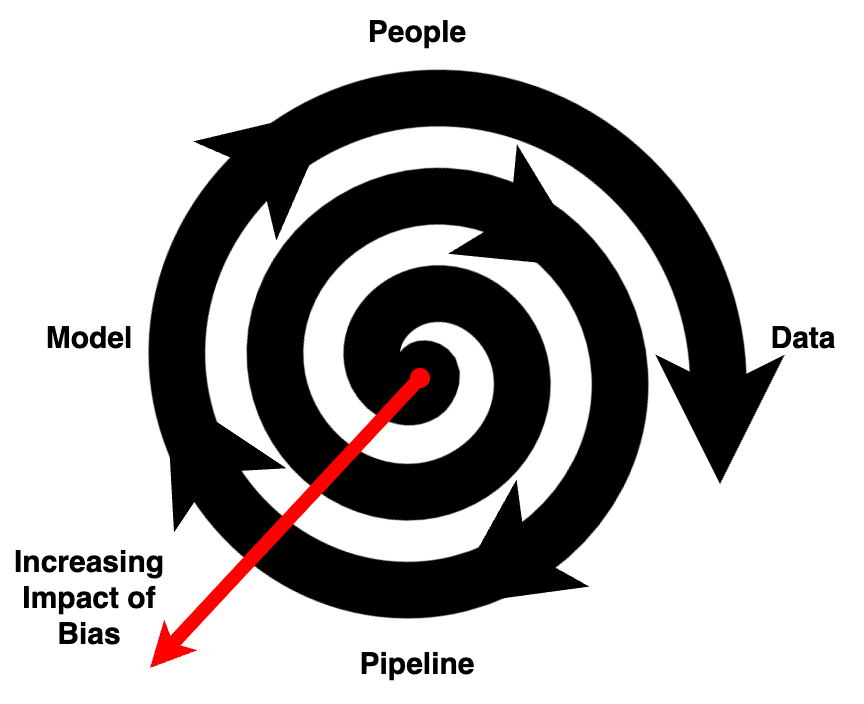

The planning toolkit is constantly evolving, in both the sources and the methods available to planners. As large-scale data, primarily from online materials, become ubiquitous, researchers and practitioners look to computer-based methods to process and analyze volumes of information too large for traditional manual techniques. Artificial Intelligence (AI) tools offer a solution to this problem, especially as they become easier to use for people outside computer science disciplines. However, these tools are often black-box solutions: what data was used to create the model, and how the model makes decisions, are opaque to the user. When biases are present in the underlying people, data, algorithms, or modeling specifications, they are carried through to the solution presented to the user. Previous work has depicted the impact of bias in a system either as a linear path from input to output or as a feedback loop in which solutions come back to affect the people for whom they were built. These representations fail to capture that AI tools that start from biased representations of people produce biased solutions, which amplify the inequalities that created the data in the first place. The effects of these solutions then become data inputs to new AI models, and the process repeats with increasing amplification of the bias. This paper therefore introduces the bias spiral, a representation that shows how solutions can perpetuate systems of harmful inequity when they take in bias through any combination of underlying historical biases (people), unrepresentative sampling (data), normative research design choices (pipeline), or feature selection (model) and are then applied back to people. Those inequities are in turn fed into the next generation of models, and as the cycle continues, the impact of the bias on people increases.
In this commentary, we argue that this is especially problematic for planners, because solutions from AI models have direct implications for people’s lives, and we offer a checklist for mitigating bias at every stage to support the ethical use of AI in social science research.